“Statistical confidence” is a frequent topic of conversation in the CRO (Conversion Rate Optimization) testing community. How much statistical confidence do we need? How should it be calculated? How much data is required for the confidence to be valid?

In this post, I will discuss the considerations we use at SmartSearch Marketing to answer some of these questions.

Seasonality

In broad terms, you could have statistical confidence with virtually any amount of data collected over any length of time – but how accurate will the data actually be? Time of day, day of week, week of month, even month of year all factor into testing results. We call this “seasonality”. Personally, I always run a test for a minimum of 14 days in order to eliminate the day-of-week impact, although running a test for at least a month is usually the norm with our website testing program.

The required minimum amount of time should be determined through an analysis of your visitors and their overall conversion patterns and behaviors. For example, if you find after looking at this data that conversion rates are twice as high in the second half of the month as in the first half, then you would want to run your tests for at least four full weeks to account for this trend.
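One way to look for this kind of pattern is to split historical daily data by half of the month and compare conversion rates. The sketch below is only an illustration: the row layout `(day, visitors, conversions)`, the function name, and the sample numbers are all made up, and your analytics export will look different.

```python
from datetime import date, timedelta

def conversion_rate_by_half(daily_rows):
    """Return the conversion rate for days 1-15 and days 16+ of the month.

    daily_rows: iterable of (day, visitors, conversions) tuples, where
    day is a datetime.date. This row layout is a hypothetical export format.
    """
    totals = {"first_half": [0, 0], "second_half": [0, 0]}
    for day, visitors, conversions in daily_rows:
        half = "first_half" if day.day <= 15 else "second_half"
        totals[half][0] += visitors
        totals[half][1] += conversions
    return {
        half: (conversions / visitors if visitors else 0.0)
        for half, (visitors, conversions) in totals.items()
    }

# Made-up data: 100 visitors a day, 2 conversions a day early in the month, 4 later.
start = date(2024, 4, 1)
sample = [
    (start + timedelta(days=i), 100, 2 if i < 15 else 4)
    for i in range(30)
]
rates = conversion_rate_by_half(sample)
print(rates)
```

If the two rates differ as sharply as they do in this invented sample, a test that covers only part of the month would see only one side of the pattern. The same grouping idea can be applied to day of week or time of day.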

Amount of Data

How much data should you collect before concluding a test, regardless of what statistical confidence is telling you? You may hear people in the industry stating a specific number, for example: “you must have at least 100 conversions per variation…” Personally, I do not subscribe to this philosophy of setting a hard number, because testing is as much about the size of the difference between variations as it is about reaching a certain volume of data.

What do I mean by this? Consider a test where variation A has 5 conversions and variation B has 95 conversions. Neither has reached the presumed threshold, yet I am pretty confident that variation B will win. With a lower volume of data and conversions, you will absolutely have to run your testing program differently. For example, you may have to accept that you will only be able to run A/B tests instead of A/B/N or multivariate tests. Low volume should not preclude you from testing at all, though. Across our wide range of clients, some can reach 100 conversions for all variations in a matter of hours, whereas others may take months to reach that same volume, but both can have a successful and timely testing program.
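To put a rough number on the 5 versus 95 example, here is a minimal sketch of one common way to estimate confidence: a one-sided two-proportion z-test. The visitor counts are an assumption (the example above gives only conversions, so 1,000 visitors per variation is invented here), the function name is hypothetical, and the normal approximation is shaky with as few as 5 conversions, so treat the result as indicative only. Your testing tool may calculate confidence differently.

```python
from math import erf, sqrt

def confidence_b_beats_a(visitors_a, conv_a, visitors_b, conv_b):
    """Approximate confidence that B's true conversion rate exceeds A's.

    Uses a pooled two-proportion z-test and the normal CDF (one-sided).
    """
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_err == 0:
        # No conversions at all (or every visit converted): nothing to compare.
        return 0.5
    z = (rate_b - rate_a) / std_err
    return 0.5 * (1 + erf(z / sqrt(2)))

# Assumed traffic: 1,000 visitors per variation (not stated in the example).
print(confidence_b_beats_a(1000, 5, 1000, 95))
```

Under that traffic assumption the confidence comes out well above 99%, even though neither variation has 100 conversions. With very unequal traffic between the variations the picture could look quite different, which is why the rates, and not just the raw counts, are what get compared.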

Statistical Confidence

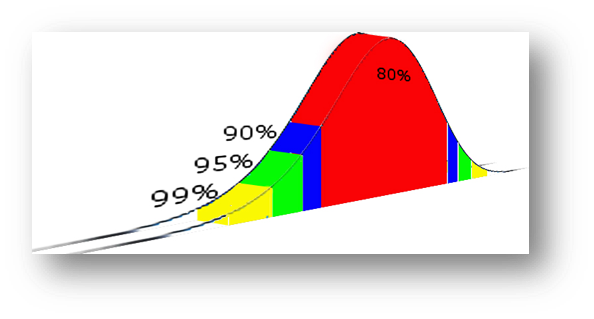

At what level of confidence do we conclude a test? 80%, 90%, 95%, 99%?

Some may pick a number and say you must always reach this level of confidence. I feel this is counterproductive. As much as we want to make this a scientific and empirical process, testing is still a series of unique situations that cannot all be dealt with in the same fashion. Will a test really change that much once you have already reached 80% confidence? Absolutely, yes it can! Recently we had a test with variation B losing by 1% at 80% confidence, and by the time we got above 90% confidence, variation B was winning by 7.5%.

Of course, higher statistical confidence is always better, but what if you can’t reach the level of statistical confidence that you have set for yourself? Do you just end the test as inconclusive and move on with the control? Even then you are effectively “picking a winner” (the control version) by default.

Rather than picking the winner based solely on reaching or not reaching a self-imposed threshold, we can use additional methods to come to a more logical conclusion. For example:

- Did we see one version consistently performing better over a long period of time?

- Is there an isolated anomaly in the data that is skewing the results, and would we have statistical confidence if it weren’t included?

By digging further into the data we can find clues that give us a more accurate and effective picture of the test results.
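Both of the questions above can be checked against a day-by-day breakdown of the test. The following is a rough sketch under stated assumptions: the daily row layout `(visitors_a, conv_a, visitors_b, conv_b)`, the function names, and the sample numbers are all invented, and confidence is estimated with a one-sided two-proportion z-test, which may not match what your testing tool reports.

```python
from math import erf, sqrt

def confidence_b_beats_a(visitors_a, conv_a, visitors_b, conv_b):
    """One-sided two-proportion z-test, returned as a confidence in [0, 1]."""
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_err == 0:
        return 0.5
    z = (conv_b / visitors_b - conv_a / visitors_a) / std_err
    return 0.5 * (1 + erf(z / sqrt(2)))

def days_b_ahead(daily):
    """Count the days on which B's conversion rate beat A's.

    Assumes every day has at least one visitor in each variation.
    """
    return sum(1 for va, ca, vb, cb in daily if cb / vb > ca / va)

def overall_confidence(daily, skip_day=None):
    """Confidence over all days, optionally leaving out one day by index."""
    kept = [row for i, row in enumerate(daily) if i != skip_day]
    visitors_a, conv_a, visitors_b, conv_b = (sum(col) for col in zip(*kept))
    return confidence_b_beats_a(visitors_a, conv_a, visitors_b, conv_b)

# Made-up 14-day test: B is slightly ahead every day except day index 6,
# where A has an unusual spike in conversions.
daily = [(500, 20, 500, 26)] * 14
daily[6] = (500, 60, 500, 20)

print(days_b_ahead(daily), "of", len(daily), "days with B ahead")
print("all days:", overall_confidence(daily))
print("without day 6:", overall_confidence(daily, skip_day=6))
```

In this invented data set, B is ahead on 13 of 14 days, and leaving out the one unusual day raises the confidence noticeably. Whether excluding a day is legitimate is a judgment call: it is easiest to defend when the anomaly has an identifiable cause rather than simply being an inconvenient result.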

Conclusion

These are just a few considerations when determining how you will run and conclude your tests. Every client and every testing situation will need its own answers to these questions. By logically working through these factors, we can run effective and agile testing programs that conclude in a reasonable amount of time and drive real-world business results!
